Depending on the school, a SENDCO may have too much data or too little, data that isn’t shared well, or data that seems to need a degree to interpret. What follows is a guide to what to look out for in four areas: your context, your attendance, your behaviour and exclusions, and your academic outcomes. Nothing replaces knowing your students and being responsive to their needs, but knowing the numbers will allow you to plan strategically and, in many cases, feel reassured about the job you are doing.

Context

Start with the context. You need to know what you’re looking at, in terms of SEND in your setting. The data-gathering bit of this is quite straightforward, as most of what you need to know here is reported to the DfE each year through the annual census. From the information that someone in your school has had to send off anyway, you’ll be able to see:

a) what types of SEND (e.g. SpLD, MLD) feature most prominently in your school

b) to what extent boys do (or don’t) dominate on the SEND register

c) to what extent students who qualify for pupil premium feature (or not) on your SEND register

d) whether particular year groups dominate your SEND register.
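
As a rough illustration, the four breakdowns above can be tallied from an export of your SEND register. This is only a minimal sketch in Python: the records and the field names (`need`, `sex`, `pupil_premium`, `year`) are made up, and whatever your own information system exports will look different.

```python
from collections import Counter

# Hypothetical register export: field names and records are illustrative only.
register = [
    {"need": "SpLD", "sex": "M", "pupil_premium": True,  "year": 7},
    {"need": "SEMH", "sex": "M", "pupil_premium": False, "year": 9},
    {"need": "SLCN", "sex": "F", "pupil_premium": True,  "year": 7},
    {"need": "SpLD", "sex": "M", "pupil_premium": False, "year": 8},
]


def breakdown(records, field):
    """Return {value: percentage of the register} for one field."""
    counts = Counter(record[field] for record in records)
    total = len(records)
    return {value: round(100 * n / total, 1) for value, n in counts.items()}


# One breakdown per question a) to d) in the list above.
context = {
    field: breakdown(register, field)
    for field in ("need", "sex", "pupil_premium", "year")
}
for field, shares in context.items():
    print(field, shares)
```

With a real register the same percentages can be set alongside the national figures to see where your school differs.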

Once you know this context, you can do a few things:

1. You can look at your other bits of data in more detail (e.g. is it my SEMH students who need to be my attendance focus?)

2. You can ask yourself questions that arise from looking at your context, e.g.:

– Do I need to be putting more resource into this particular year group?

– Do I need a greater whole-school training focus on SLCN?

– Do I need to look at a potential issue with under-identification of students with SpLD, and look at how to solve this?

– Do I need to push my Headteacher to employ a part-time counsellor, using the balance of my SEND register as evidence for this?

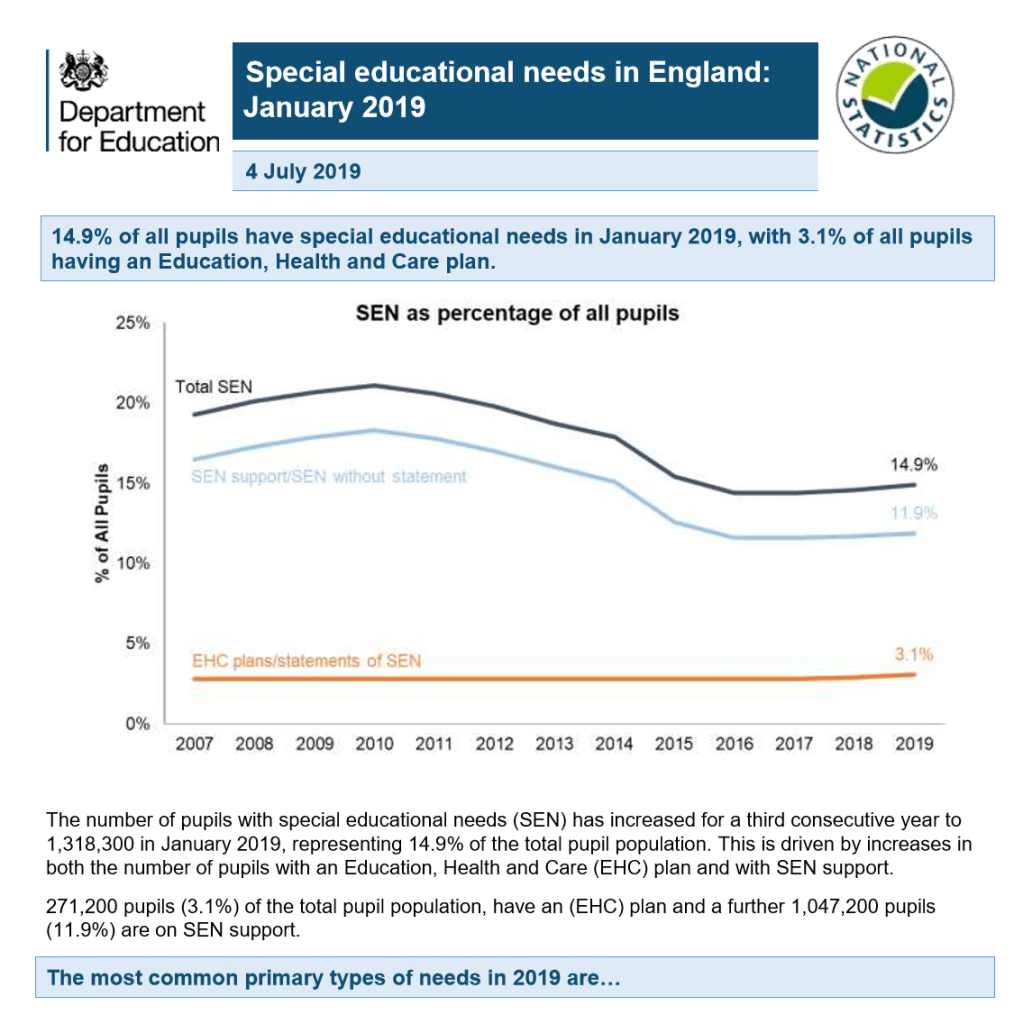

To really understand what the numbers mean, it can be very useful to compare them with national figures. This information is released every year by the DfE, in its ‘Statistical First Release’ for SEN. You can quickly see whether your context places you above or below national average, e.g. for students at SEN Support or students with EHCPs.

Attendance

Once you know your context, you can begin to look into the dataset for this group of students, starting with attendance. Your school attendance figures will be known by whoever is responsible for sending the DfE data each year; you may have a data manager who can give you the most up-to-date figure, and can set up a system where you are given this attendance data at the beginning of each week, month or half-term.

In England, there is a gap in attendance between those with SEND and those without, details of which can be found here. The gap is currently 4.4 percentage points for students with EHCPs and 2.2 percentage points for students at SEN Support, with national attendance being 91.3% for students with EHCPs and 93.5% for students at SEN Support (England, 2018-19). This means asking yourself:

1. Am I above or below national average?

2. Is the gap between attendance of SEND and attendance of those without SEND in my school above or below national average?

3. Is poor attendance of students with SEND explained by one or two students with a chronically low attendance, or is it indicative of a wider issue that needs to be addressed?

4. Do I, as the SENDCO, have actions in place to improve poor attendance? Do students with SEND get support and/or sanctions from whole-school systems aimed at improving attendance, and do these systems work satisfactorily for students with SEND?
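
Questions 1 to 3 can be roughed out in a few lines. The sketch below uses the national figures quoted above, but the school data is invented, and averaging each pupil’s attendance percentage is a simplification (official figures are calculated from sessions, not from per-pupil averages). The `drop_lowest` parameter is a hypothetical convenience for testing whether one or two chronically low attenders explain the gap.

```python
from statistics import mean

# National figures quoted in the text (England, 2018-19).
NATIONAL_SEND_ATTENDANCE = {"EHCP": 91.3, "SEN Support": 93.5}
NATIONAL_GAP = {"EHCP": 4.4, "SEN Support": 2.2}

# Hypothetical school data: one attendance percentage per pupil.
school = {
    "EHCP": [96.0, 94.5, 52.0, 95.5],
    "SEN Support": [95.0, 93.0, 97.0, 91.0, 94.0],
    "No SEND": [96.5, 97.0, 95.0, 98.0, 94.5, 96.0],
}


def attendance_report(data, group, drop_lowest=0):
    """Compare one SEND group with pupils without SEND and with national figures.

    drop_lowest removes that many of the lowest attenders from the group first.
    """
    values = sorted(data[group])[drop_lowest:]
    group_mean = mean(values)
    gap = mean(data["No SEND"]) - group_mean
    return {
        "attendance": round(group_mean, 1),
        "gap": round(gap, 1),
        "above_national": group_mean > NATIONAL_SEND_ATTENDANCE[group],
        "gap_wider_than_national": gap > NATIONAL_GAP[group],
    }


print(attendance_report(school, "EHCP"))
print(attendance_report(school, "EHCP", drop_lowest=1))
```

In this invented example the EHCP group looks well below national until the single lowest attender is set aside, which is exactly the distinction question 3 asks you to make.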

Behaviour, including exclusions

In England, the proportion of exclusions accounted for by pupils with SEN is 45% (DfE, 2017-18 data). As with attendance, data on exclusions is all reported through the census, and will be kept by someone in your school. When comparing with national data, you can ask yourself a similar set of questions to that which you asked about attendance:

1. In terms of students with SEND receiving fixed-term or permanent exclusions, am I above or below national average?

2. Is there a gap between the proportion of students with and without SEND getting an exclusion, and is this gap in line with national average?

3. Are poor exclusion figures for SEND explained by one or two students with several fixed-term exclusions (FTEs), or are they indicative of a wider issue that needs to be addressed?

4. Do I, as the SENDCO, have actions in place to address poor behaviour? Do students with SEND get support and/or sanctions for their behaviour, and do these systems work for students with SEND?

Your school is not obliged to release data on its own behaviour systems (which students are getting detentions, etc.), but it may well keep this somewhere, allowing you to do some analysis that may be much more useful to you than exclusions data, which only covers the more extreme cases. You will want to ask yourself whether a student with SEND in your school picks up more sanctions than other students and, if so, what work you can do to address this (e.g. targeted social skills work, a homework club, or whole-school work on tolerance and respect).
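
One simple way to frame that comparison is sanctions per pupil for each group. The sketch below is illustrative only: the pupil IDs and the shape of the behaviour log are assumptions, not the format of any particular school system.

```python
# Hypothetical behaviour log: one entry per sanction, recorded by pupil ID.
sanctions = ["p1", "p1", "p1", "p2", "p5", "p5", "p7"]

# Hypothetical pupil lists.
send_pupils = {"p1", "p2", "p3"}
all_pupils = {"p1", "p2", "p3", "p4", "p5", "p6", "p7", "p8", "p9"}


def sanctions_per_pupil(log, pupils):
    """Average number of sanctions per pupil in the given group."""
    if not pupils:
        return 0.0
    return sum(1 for pupil in log if pupil in pupils) / len(pupils)


send_rate = sanctions_per_pupil(sanctions, send_pupils)
other_rate = sanctions_per_pupil(sanctions, all_pupils - send_pupils)

print(f"SEND: {send_rate:.2f} sanctions per pupil")
print(f"Other pupils: {other_rate:.2f} sanctions per pupil")
```

As with attendance, it is worth checking whether a high rate is driven by one or two students before drawing conclusions about the whole group.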

Academic outcomes

You will certainly be expected to know your headline academic figures for SEND. Make sure you find these out if you don’t know them already. In primary, this will be your percentage of students with SEND:

i) achieving the Early Learning Goals by the end of reception

ii) passing the year 1 phonics screening

iii) passing the year 2 SATs

iv) passing the year 6 SATs

In secondary schools, this will be your Progress 8 and Attainment 8 scores in Key Stage 4 and equivalent scores in Key Stage 5.

In all settings, a comparison with national figures will make your understanding of your data much more informed. For example, Progress 8 scores for students with SEND in year 11 sit at around -0.61 (2018, England), which gives you a benchmark. This benchmark needs to be raised, but it is indicative of the current progress of students with SEND. Often it is reassuring to discover that, in spite of frustrations, your phonics screening outcomes (for example) are above national average. SEND outcomes nationally are low, but knowing the national data should make it easier for the SENDCO to recognise successes. Where you are below national in some areas, look at your 3-year trend; this may give you reason to be more positive. You can also make comparisons to the average for your Local Authority.

When looking at academic outcomes, you could go into almost endless levels of detail, e.g.

a. by subject area (does Maths do better than Science in terms of academic outcomes for students with SEND?)

b. by need type (do students with SLCN do better than those with MLD?)

c. by individual staff member (is there a teacher who gets lower outcomes for students with SEND, and has a training need?)

d. by individual child (are there students with SEND who are achieving so well that their need may have changed? Could that child come off the SEND register? Are there students who are not on the SEND register currently, but who are achieving at a very low level and should be considered for some assessment of SEND?)

In a small school, this level of detailed analysis will involve too few students to be statistically significant, but it will still paint a picture of how students are achieving in your school. You may reserve this level of detail for the externally reported data, but there should be a system whereby you look at data from each data drop that takes place in your school. For example, what can you learn from the year 5 mid-year tests to help you boost end-of-year outcomes?
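
If you do break outcomes down this far, it helps to keep the group size next to every average so that tiny groups are not over-read. The following is a minimal sketch with invented results; the cut-off `MIN_GROUP_SIZE` is an assumption chosen for illustration, not an official threshold.

```python
from collections import defaultdict
from statistics import mean

MIN_GROUP_SIZE = 5  # assumed cut-off for illustration, not an official threshold

# Hypothetical results: (need type, subject, score).
results = [
    ("SLCN", "Maths", 4), ("SLCN", "Maths", 5), ("SLCN", "Maths", 3),
    ("SLCN", "Maths", 4), ("SLCN", "Maths", 4),
    ("MLD", "Maths", 3), ("MLD", "Maths", 2),
]


def summarise(rows, key_index):
    """Group scores by need type (key_index=0) or subject (key_index=1)."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key_index]].append(row[2])
    return {
        key: {
            "n": len(scores),
            "mean": round(mean(scores), 2),
            "small_group": len(scores) < MIN_GROUP_SIZE,  # treat with caution
        }
        for key, scores in groups.items()
    }


by_need = summarise(results, 0)
for need, summary in by_need.items():
    print(need, summary)
```

Here the MLD average rests on two students, so it is flagged rather than compared directly with the SLCN figure.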

Numbers are not the whole story

Finally, it should be said that numbers are only ever part of the story. There may be a child with 50% attendance who affects your figures but that is the most they can get into school currently, irrespective of intervention. You may have a child who achieves Entry Level qualifications at Key Stage 4 and does nothing for your performance tables, but has a real sense of achievement and goes on successfully to a college placement. Data is vital to a SENDCO doing their job well, as long as a sense of perspective and a moral compass go alongside.
